Resilient Cyber Newsletter #83

- Chris Hughes from Resilient Cyber <resilientcyber@substack.com>

Capital, Competition and Cybersecurity, Securing AI Where it Acts, Hacking OpenClaw Hype, 2026 State of Software Supply Chain, AI-driven Zero Day Discovery, and OS-level Isolation for AI Agents

Week of February 3, 2026

Welcome to issue #83 of the Resilient Cyber Newsletter!

This week’s issue spans the full landscape, from a deep conversation with Carta’s Peter Walker on the capital, competition, and talent dynamics shaping cybersecurity startups and beyond, to mounting evidence that agentic AI risks have crossed from theoretical to operational. Operation Bizarre Bazaar documented over 35,000 LLMjacking attack sessions in 40 days, the OpenClaw/Moltbot saga exposed the fragility of the Personal AI Assistant (PAI) craze, and new research shows AI agents can exploit vulnerabilities for pocket change. The economics of attack and defense are shifting fast.

On the building side, there’s real momentum. I shared my talk from the Cloud Security Alliance’s Agentic AI Summit on securing AI where it acts, Sonatype’s 2026 report revealed open source malware has gone industrial with 454,000+ new malicious packages last year, and Cisco open-sourced their Skill Scanner for agent security. From Lakera’s case against LLMs grading their own homework to Sigstore extending provenance to AI agents, the throughline is clear: our security tooling and frameworks need to evolve as fast as the threats they’re meant to address.

Let’s dig into what’s been an exceptionally eventful week.

Interested in sponsoring an issue of Resilient Cyber? This includes reaching over 31,000 subscribers, ranging from Developers, Engineers, and Architects to CISOs/Security Leaders and Business Executives. Reach out below!

Cyber Leadership & Market Dynamics

Capital, Competition and Cybersecurity

In this episode of Resilient Cyber I sit down with Peter Walker, Head of Insights at Carta. We dove into the dynamic and interesting intersection of Capital, Competition and Cybersecurity, including startups, venture capital, talent and AI. We covered a lot of topics, including:

Cyera employees to sell tens of millions in shares at $9 billion valuation

During my conversation with Peter Walker we discussed buybacks and tender offers, a move that lets new investors or other interested parties become shareholders while giving existing employees a path to liquidity before an IPO or M&A event. Coincidentally, news came out this week with Cyera as a cyber-specific example: the firm announced it will allow employees to sell tens of millions of dollars in shares at its current $9B valuation. You can hear the importance of retention and team morale come through from Yotam, Cyera’s CEO, below:

The Cyberstarts Employee Liquidity Fund, established by Gili Raanan with a total of $300 million, is designed to support key talent across the fund’s cybersecurity portfolio and enable employees to share in their companies’ success without needing to leave. To me, this is an innovative way to keep critical talent and give them financial upside along the journey, before an IPO or other liquidity event, especially in the highly competitive field of cybersecurity, where talent always comes at a premium.

Marc Andreessen: The real AI boom hasn’t even started yet

I enjoyed this interview with Marc Andreessen by Lenny Rachitsky. It was really well done, covering a wide range of topics and taking the time to dig into them rather than settling for quick soundbites or clips. Marc argues the best is yet to come when it comes to AI and its potential for the market, startups and society.

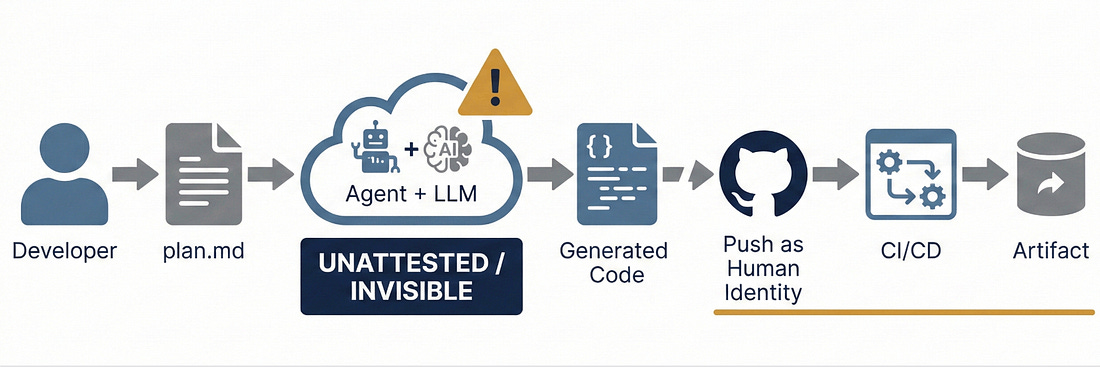

Dallas County Pays $600,000 to Pentesters It Arrested for Authorized Testing

This case has become a cautionary tale in our industry, and it has finally reached resolution. Gary DeMercurio and Justin Wynn from Coalfire Labs were hired by the Iowa State Court Administration in 2019 to test courthouse security. They found a door propped open, closed it, tripped an alarm, and waited for authorities as protocol dictates. Despite having authorization letters, they were arrested on burglary charges. The $600,000 settlement is a substantial acknowledgment of wrongdoing. What strikes me about this case is how it illustrates the fundamental communication breakdown that can occur even with properly scoped engagements. For anyone running red team or pentest programs, this is a reminder that authorization documentation and stakeholder coordination need to be airtight.

Startup Layoff Landscape

If you’ve been following me, you know I recently had Peter Walker, Head of Insights at Carta, on my Resilient Cyber Show. Carta has data on more than 60,000 startups, and he is constantly providing excellent breakdowns on hiring, equity, fundraising and much more. His latest example gave a glimpse into the startup layoff landscape. He shows that layoffs have been steadily declining heading into 2026, and while many now suspect we will see layoffs due to AI, the story is more subtle than that. He points out that while some layoffs may be happening due to AI, the real story is that startups are hiring more slowly and less aggressively than in the past. This could be driven by the productivity gains from AI reducing the need for human labor.

Federal Government Ignored Cybersecurity Warning for 13 Years - Now Hackers Are Exploiting the Gap

This piece resonates with something I’ve observed repeatedly: government bureaucracy moves methodically but slowly, and in cybersecurity, that pace creates critical gaps. In 2012, a DoD IG report raised concerns about signature-based antivirus limitations. The Senate Armed Services Committee echoed those concerns. More than a decade later, those same reactive defenses are still protecting critical systems while adversaries have leapfrogged with AI and automation. The APT41 spear-phishing campaign targeting trade groups ahead of U.S.-China trade discussions, which evaded detection, is a perfect example of what happens when you’re always one step behind. The author’s call to revise BOD 18-01 is spot-on.

Cybersecurity Can Be America’s Secret Weapon in the AI Race

National Cyber Director Sean Cairncross has been clear: “China is without question the single biggest threat in this domain that we face.” FBI Director Wray describes China as having a bigger hacking program than “every other major nation combined.” What I find compelling about this op-ed is the framing that cybersecurity isn’t just defensive - it’s a competitive advantage. If we can build AI systems that are trustworthy and secure, that becomes a differentiator against adversaries investing heavily in offensive capabilities. The federal government needs to avoid reactive regulation while still ensuring security is built into every stage of AI development.

U.S. Pushes Global AI Cybersecurity Standards

The push for global AI cybersecurity standards is something I’ve been advocating for. Fragmented approaches across jurisdictions create gaps that adversaries exploit. The Trump administration’s six-pillar national cybersecurity strategy - covering offense and deterrence, regulatory alignment, workforce, procurement, critical infrastructure, and emerging tech - provides a framework, but execution will be everything. International coordination on AI security standards could be a game-changer if we get it right.

Geopolitics in the Age of AI: Foreign Affairs Analysis

This Foreign Affairs piece provides important geopolitical context for the AI security discussions we’re having. AI isn’t just a technology problem - it’s reshaping international power dynamics. For security practitioners, this means understanding that our work exists within a larger strategic competition. The decisions we make about AI security architecture have implications beyond just protecting individual organizations.

AI

Securing AI Where it Acts

I gave a talk at the Cloud Security Alliance’s 2026 Agentic AI Summit last week, and I wanted to share it here. Folks can also check it out on CSA’s YouTube along with other excellent speakers and topics. I tried to cover a lot of ground, including:

"Ship fast, capture attention, figure out security later"

We've heard this story before, and we all know how it ends. Yet, time after time, speed to market, revenue, and in this case, hype, are the incentives that drive behavior and keep security as an afterthought. Another gem from Jamieson O'Reilly exposes the fragility of the entire Personal AI Assistant (PAI) craze around OpenClaw (previously Moltbot and Clawdbot).

The OpenClaw hysteria has reached a fever pitch this week. It feels like every single security practitioner or researcher is posting about it, its risks, its implications and more. Here are but a few resources covering OpenClaw and its implications:

Prior to the rebrand, Low Level did an excellent overview of it as well, for those who prefer to watch:

Below are some slides breaking down the hype and architecture.

State of AI in 2026: LLMs, Coding, Scaling Laws, China, Agents, GPUs, AGI | Lex Fridman Podcast

If you want to go really deep into the weeds of models, their evolutions, open vs. closed source and more, I caught a recent episode of the Lex Fridman podcast and it does just that.

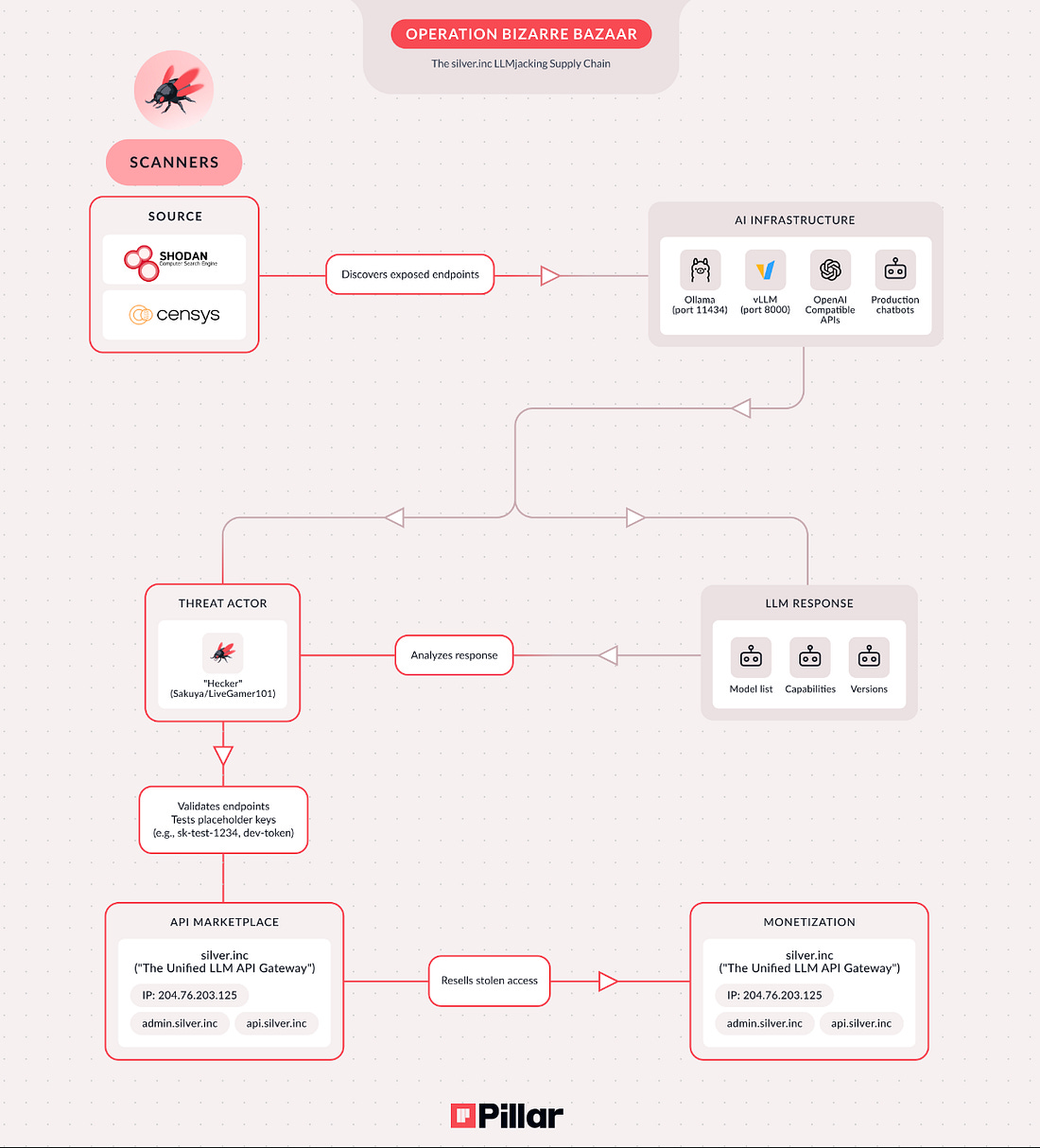

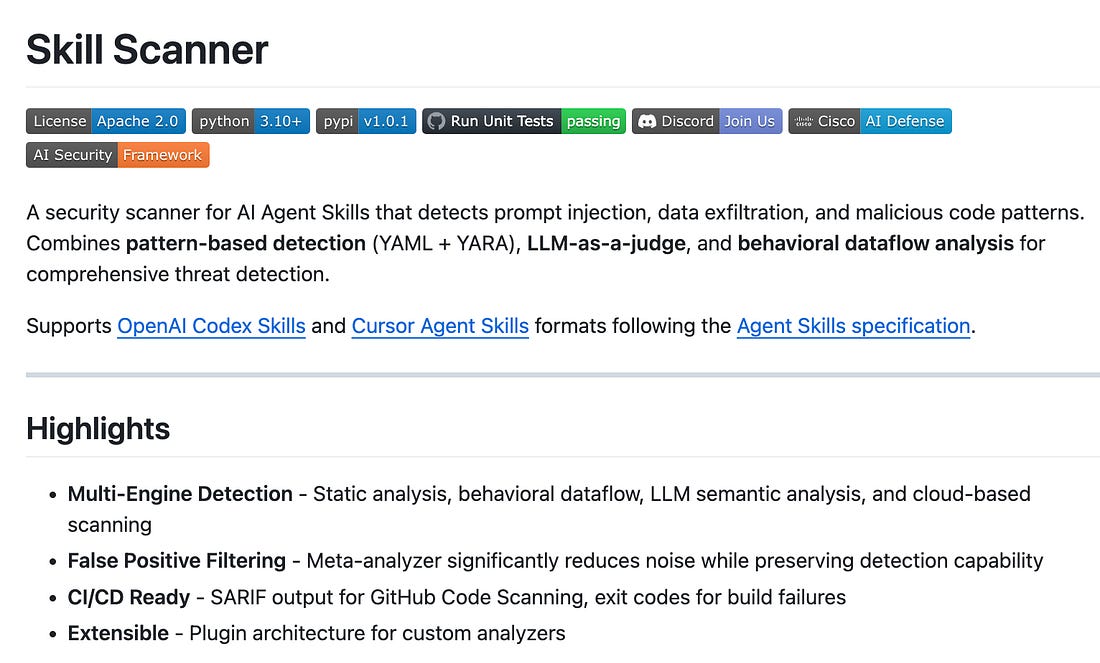

Operation Bizarre Bazaar: First Attributed LLMjacking Campaign

This is interesting threat intelligence. Pillar Security documented the first systematic campaign targeting exposed LLM and MCP endpoints at scale, with full commercial monetization. The marketplace (silver.inc) operates as “The Unified LLM API Gateway” - reselling discounted access to 30+ LLM providers without authorization. Between December 2025 and January 2026, researchers recorded over 35,000 attack sessions in 40 days - averaging 972 attacks per day. The attackers target Ollama on port 11434 and OpenAI-compatible APIs on port 8000, finding targets via Shodan and Censys. LLMjacking is becoming a standard threat vector alongside ransomware and cryptojacking. If you’re running self-hosted LLM infrastructure, this is your wake-up call.

Cisco Open-Sources Skill Scanner for Agent Security

This is exactly the kind of tooling we need. Cisco’s Skill Scanner detects prompt injection, data exfiltration, and malicious code patterns in AI agent skills. It combines pattern-based detection, LLM-as-a-judge, and behavioral dataflow analysis. What prompted this? Recent research showing 26% of 31,000 agent skills analyzed contained at least one vulnerability. The tool supports Claude Skills and OpenAI Codex skills, and integrates with CI/CD to fail builds if threats are found. I’ve been calling for better supply chain security for AI agents - this is a significant step forward. It’s Apache 2.0 licensed, so organizations can adopt and extend it.

Dario Amodei: The Adolescence of Technology

Anthropic’s CEO has released a 20,000-word companion to “Machines of Loving Grace”, this time focused on risks rather than benefits. Amodei likens our current AI trajectory to a turbulent teenage phase where we gain godlike powers but risk self-destruction without maturity. Key claims: powerful AI could be 1-2 years away, AI is already accelerating Anthropic’s own AI development, and 50% of entry-level white-collar jobs could be eliminated within 1-5 years. His proposed solutions include training Claude to almost never violate its constitution, advancing interpretability science, and progressive taxation of AI profits. The intellectual honesty about risks from someone building these systems is valuable - even if you disagree with specific predictions.

Lakera: Stop Letting Models Grade Their Own Homework

This piece articulates something I’ve been thinking about for a while. Using LLMs to protect against prompt injection creates recursive risk - the judge is vulnerable to the same attacks as the model it’s protecting. Lakera’s argument: LLM-based defenses fail quietly, performing well in demos but breaking under adaptive real-world attacks. Their bottom line: “If your defense can be prompt injected, it’s not a defense.” Security controls must be deterministic and independent of the LLM. This reinforces my view that we need defense-in-depth approaches, not single points of failure that share the same vulnerability class as what they’re protecting.

Foundation-Sec-8B: First Open-Weight Security Reasoning Model

Cisco released what they’re calling the first open-weight security reasoning model. Foundation-Sec-8B-Reasoning extends their base security LLM with structured reasoning for multi-step security problems. It’s optimized for SOC acceleration, proactive threat defense, and security engineering. Performance shows +3 to +9 point gains over Llama-3.1-8B on security benchmarks, with comparable or better performance than Llama-3.1-70B on cyber threat intelligence tasks. The open-weight release under Apache 2.0 means organizations can run this locally, on-prem, or in air-gapped environments. This is the kind of specialized tooling that could help address the security skills gap.
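To make Lakera's point above about deterministic, LLM-independent controls a bit more concrete, here is a minimal sketch of a policy gate that checks an agent's tool calls without consulting any model. Everything in it is an assumption for illustration: the `ToolCall` type, the tool names, and the allowlisted host are hypothetical and are not taken from Lakera's article or any specific agent framework.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical policy values for illustration only.
ALLOWED_TOOLS = {"search_docs", "fetch_url"}
ALLOWED_HOSTS = {"docs.example.com"}


@dataclass(frozen=True)
class ToolCall:
    """A tool invocation proposed by an agent (hypothetical shape)."""
    name: str
    args: dict


def is_allowed(call: ToolCall) -> bool:
    """Deterministic check: the same input always yields the same decision."""
    # Deny by default: unknown tools are rejected outright.
    if call.name not in ALLOWED_TOOLS:
        return False
    if call.name == "fetch_url":
        url = urlparse(str(call.args.get("url", "")))
        # Require HTTPS and an exact host match; no model is asked to judge.
        return url.scheme == "https" and url.hostname in ALLOWED_HOSTS
    return True


if __name__ == "__main__":
    print(is_allowed(ToolCall("fetch_url", {"url": "https://docs.example.com/a"})))
    print(is_allowed(ToolCall("run_shell", {"cmd": "ls"})))
```

Because the gate is plain code with a fixed allowlist, injected text in the agent's context cannot talk it into a different answer. A real deployment would need a much richer policy, but the design choice is the same: the enforcement point sits outside the model.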
Wiz: AI Agents vs Humans - Who Wins at Web Hacking in 2026?

Wiz’s research examines AI agents as both attack vectors and offensive tools. Their findings: AI agents consume 2-2.5x more tokens in broad scenarios, with costs soaring for mass tasks. But the real insight is about AI agents as attack surfaces. In tests, Perplexity’s Comet successfully purchased items from fake storefronts and clicked phishing links on behalf of users. AI browsers lack the “skepticism” needed to spot phishing. Security researchers predict AI agents will become a main attack vector in 2026 - they’re “always on” and vulnerable 24/7. When autonomous agents probe, validate, and exploit at machine speed, the gap between vulnerable and compromised collapses.

AI Slop Is Overwhelming Open Source

Daniel Stenberg, maintainer of curl, has had enough. He’s winding down curl’s bug bounty system because of AI-generated “slop” clogging the queue. Three major projects (curl, tldraw, and Ghostty) have changed policies due to AI slop. The security implications are real: attackers are publishing malicious packages under commonly hallucinated package names (“slop-squatting”). AI tools hallucinate packages that don’t exist, and attackers register those names. The maintainers holding our ecosystem together are overwhelmed, and this creates security gaps. This isn’t just a productivity problem - it’s a supply chain security problem.

Zenity: From IDE to CLI - Securing Agentic Coding Assistants

Zenity highlights a critical transition: AI coding tools like Cursor, Claude Code, and GitHub Copilot are evolving from IDE plugins into CLI-driven workflows embedded in developer and build environments. This expansion creates new security gaps that traditional AppSec tools weren’t designed to address. Key control areas: visibility into shadow coding assistants, enforcement of guardrails, prevention of data leakage and privilege escalation, and protection against indirect prompt injection. The emphasis on end-to-end protection, from IDE to CLI and from build-time through runtime, is the right framing.

Practical Security Guidance for Sandboxing Agentic Workflows and Managing Execution Risk

OS-Level Isolation for AI Agents

Really awesome work and resource here from Luke Hinds. Amid all the OpenClaw hype, the need for isolation for AI agents quickly became a topic of discussion. In Luke's words: "nono is a capability-based shell that uses kernel-level security primitives to isolate processes:" He expands below:

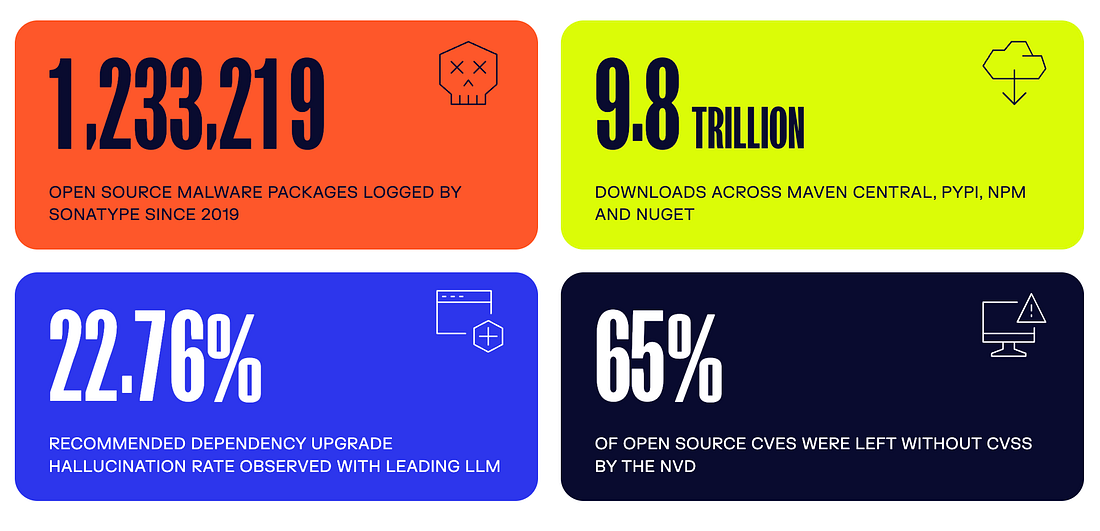

Context Engineering as the New Security Firewall 🧱

In a recent piece, Ken Huang, youssef_ H. and Edward Lee emphasize the need to govern the full information lifecycle across an agent's perception and action loop. They argue that an agent's "Context" is the primary trust boundary, which makes Context Engineering the new security firewall. They also argue for a Context Bill of Materials, or CxBOM, tracking everything that composes the context of an agent. That of course is challenging, as they point out, since an agent's context has multiple sources, many of which don't require direct access via a user prompt interface.

This paper from the end of 2025, "Agentic AI Security, Threats, Defenses, Evaluations and Open Challenges", is still one of the best reads on Agentic AI Security in my opinion.

AppSec

Sonatype Drops 2026 State of Software Supply Chain Report

It’s no surprise that I’m a fan of a great report, and when it comes to software supply chain, few do it better than Sonatype. They just dropped their 2026 report and it is a wealth of insights; below are just a few. I highly recommend checking out the full report.

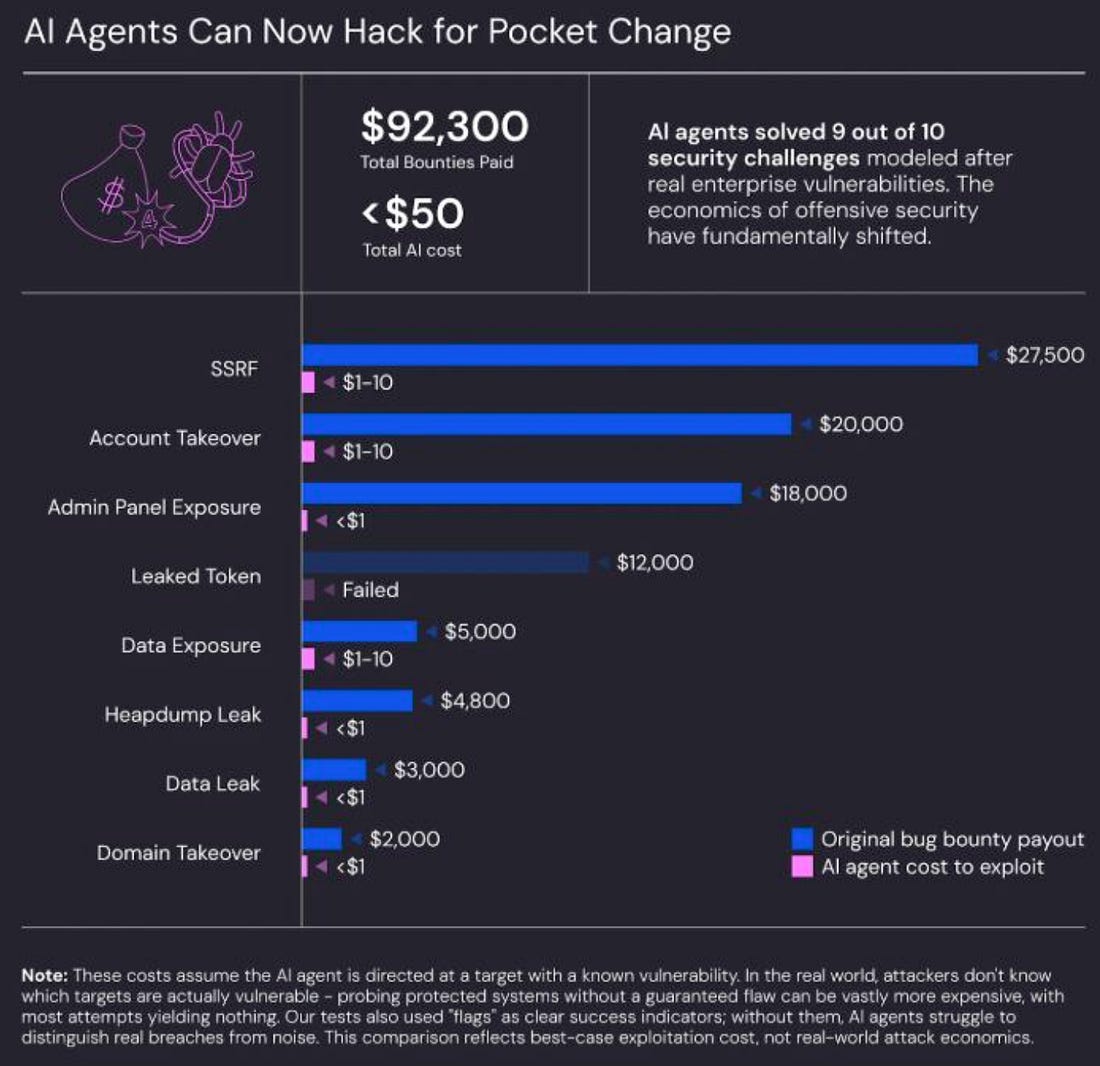

AI found 12 of 12 OpenSSL zero-days (while curl cancelled its bug bounty)

Interesting piece from Stanislav Fort and the AISLE™ team. They walk through how, on one hand, we're seeing widespread AI slop and leading open source projects shutting down bug bounties because of it, while on the other, AI is finding vulnerabilities, including some of high severity, in OpenSSL and curl, two of the most audited and widely used open source projects in the world.

AI Agents Can Now Hack for Pocket Change 🪙

One of the more interesting aspects of the rise of LLMs and agents, for me, is the impact on the economic asymmetry between attackers and defenders. Yet another example comes from recent research by Wiz and Irregular (formerly Pattern Labs).

CVE Quality by Design Manifesto

Bob Lord, former CISA senior technical adviser and architect of the Secure by Design initiative, has been thinking deeply about CVE quality. CISA released their “Strategic Focus: CVE Quality for a Cyber Secure Future” roadmap, emphasizing that the CVE Program must transition into a new era focused on trust, responsiveness, and vulnerability data quality. The program is “one of the world’s most enduring and trusted cybersecurity public goods” - but it needs conflict-free stewardship, broad multi-sector engagement, and transparent processes. With Lord and Lauren Zabierek having left CISA, the future of Secure by Design is uncertain, but these principles remain critical.

Sigstore for AI Agent Provenance

This piece bridges Sigstore’s cryptographic signing infrastructure with the emerging Agent2Agent (A2A) protocol. As AI agents become autonomous and interconnected, establishing trust and provenance becomes paramount. Sigstore A2A enables keyless signing of Agent Cards, SLSA provenance generation linking cards to source repos and build workflows, and identity verification. All signatures are recorded in Sigstore’s immutable transparency log. A single compromised model can influence downstream decisions, access external systems, or trigger cascading failures. Trust in model integrity can no longer be assumed - it must be verifiable. This is supply chain security adapted for the agentic era.

Final Thoughts

This week crystallizes something I’ve been saying for months: the theoretical risks of agentic AI are becoming operational reality. OpenClaw isn’t a hypothetical; it’s massive numbers of exposed instances leaking credentials. Operation Bizarre Bazaar isn’t a theoretical attack model; it’s 35,000 attack sessions in 40 days monetizing stolen LLM access.

But I’m also encouraged by the response. Cisco open-sourcing Skill Scanner, Foundation-Sec-8B providing specialized security reasoning, and Sigstore’s potential to extend to AI agent provenance are the building blocks of a more secure agentic ecosystem.

For those following my work on the OWASP Agentic Top 10 and in my role at Zenity, this week’s OpenClaw coverage validates why that framework, and more focus in general on securing Agentic AI, matters. Every risk category the OWASP Agentic AI Top 10 identified, from tool misuse to identity abuse to supply chain vulnerabilities, showed up in real-world incidents this week.

The adolescence of technology, as Dario Amodei puts it, is turbulent. But it’s also when we have the opportunity to build the right foundations.
