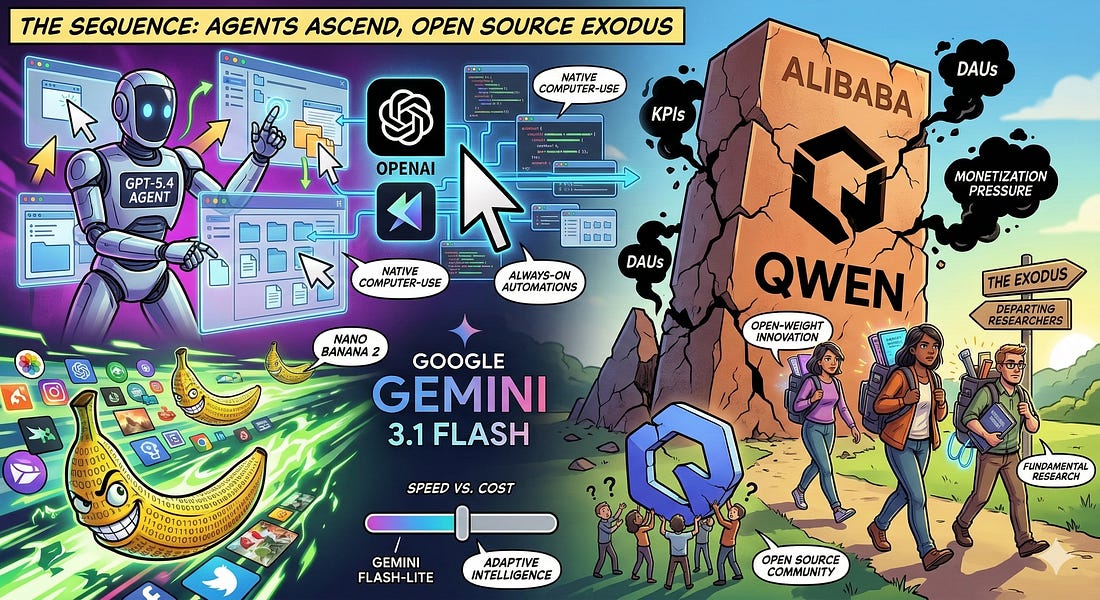

The Sequence Radar #820: GPT-5.4, Cursor, and the New Desktop

A week of massive developments in agentic AI.

📝 Editorial: When AI Takes the Mouse: GPT-5.4, Cursor, and the New Desktop

Welcome to this week’s edition of The Sequence. The contrast between algorithmic triumph and organizational fragility couldn’t be starker right now. While proprietary AI models are crossing the Rubicon into autonomous, always-on digital workers, the human engines driving open-weight breakthroughs are colliding head-first with corporate bureaucracy. Here is what you need to know about this week’s seismic shifts.

OpenAI & Cursor: The Agentic Desktop

OpenAI just redefined the frontier with the release of GPT-5.4 and its reasoning-focused counterpart, GPT-5.4 Thinking. The headline isn’t just better benchmarks; it’s native computer-use capability. GPT-5.4 can interact directly with software interfaces: interpreting screenshots, clicking, scrolling, and executing multi-step workflows across native desktop applications. Supporting a 1-million-token context window, it represents a monumental step toward reliable agents capable of navigating the unstructured environments of modern knowledge work.

This agentic shift is equally explosive in the developer ecosystem. Cursor just unveiled Automations, a system designed to manage always-on coding agents. Instead of waiting for a developer’s prompt, Cursor’s agents are now triggered asynchronously by code changes, PagerDuty incidents, or scheduled timers. They operate autonomously in the background, reviewing pull requests, investigating server logs, and running exhaustive test suites. The paradigm is officially shifting from AI as an interactive copilot to AI as an asynchronous, parallel coworker.

Google’s Need for Speed: Gemini Flash

Google, meanwhile, is optimizing for sheer velocity and cost-efficiency. They launched Gemini 3.1 Flash-Lite in preview, delivering frontier-class reasoning at a fraction of the compute cost, which makes it ideal for high-volume, low-latency tasks.
Complementing this text model is the release of Nano Banana 2, officially designated as Gemini 3.1 Flash Image. Google has successfully mapped the high-speed intelligence of its Flash architecture onto visual generation. Nano Banana 2 merges the complex prompt adherence and precise text-rendering of previous “Pro” models with lightning-fast inference. Rolling out as the default across the Gemini app and Google Workspace, it allows developers to generate production-ready assets and execute rapid, iterative image editing at unparalleled speeds.

The Qwen Exodus: A Cautionary Tale

But while proprietary giants accelerate, the open-weight ecosystem just suffered a devastating blow. Less than 24 hours after releasing the highly praised, intelligence-dense Qwen 3.5 small models, Alibaba’s core AI team began to disintegrate. Technical lead Junyang Lin, alongside key researchers like Binyuan Hui and Kaixin Li, abruptly resigned. Insiders report a textbook clash of paradigms: Alibaba is breaking up the vertically integrated research team into horizontally segmented, KPI-driven units desperate to boost Daily Active Users (DAUs). Replacing a core fundamental research mandate with metrics meant to aggressively monetize consumer chatbots has alienated the talent that drove Qwen past 600 million downloads. If Alibaba pivots fully to closed monetization, the community loses one of its most vital engines of open-source innovation.

🔎 AI Research

Beyond Language Modeling: An Exploration of Multimodal Pretraining

AI Lab: FAIR, Meta, and New York University

Summary: This paper investigates unified multimodal pretraining from scratch using the Transfusion framework to jointly learn language and vision capabilities. Key findings include the identification of Representation Autoencoders as an optimal visual representation and the discovery that vision is significantly more data-hungry than language.
Qwen3-Coder-Next Technical Report

AI Lab: Qwen Team (Alibaba)

Summary: The report introduces Qwen3-Coder-Next, an 80-billion-parameter Mixture-of-Experts model specialized for coding agents that activates only 3 billion parameters during inference. The model achieves strong performance on coding benchmarks by leveraging large-scale agentic training with verifiable tasks paired with executable environments.

DREAM: Where Visual Understanding Meets Text-to-Image Generation

AI Lab: MIT CSAIL and Meta AI

Summary: The researchers present DREAM, a unified framework that jointly optimizes discriminative and generative objectives to improve both visual understanding and text-to-image generation. The system utilizes a progressive masking schedule called Masking Warmup and a novel Semantically Aligned Decoding strategy to achieve high-fidelity image synthesis.

KARL: Knowledge Agents via Reinforcement Learning

AI Lab: Databricks AI Research

Summary: This paper introduces KARL, a system for training enterprise search agents using reinforcement learning to achieve state-of-the-art performance on complex, grounded reasoning tasks. By employing a multi-task off-policy RL paradigm and an agentic synthesis pipeline, the model demonstrates superior cost-efficiency and generalization across diverse search regimes compared to leading closed models.

Agentic Code Reasoning

AI Lab: Meta

Summary: This study explores “agentic code reasoning,” which allows LLM agents to navigate codebases and perform deep semantic analysis without executing any code. The authors introduce semi-formal reasoning, a structured prompting methodology that uses templates to significantly improve accuracy on tasks like patch equivalence and fault localization.
Bayesian Teaching Enables Probabilistic Reasoning in Large Language Models

AI Lab: Massachusetts Institute of Technology, Meta, Google DeepMind, and Google Research

Summary: This research finds that off-the-shelf large language models (LLMs) struggle to update probabilistic beliefs during multi-round interactions, often plateauing after a single exchange. By using "Bayesian teaching" (fine-tuning LLMs to mimic the predictions of a normative Bayesian model), researchers significantly improved the models' ability to adapt to user preferences and demonstrated that these reasoning skills generalize to entirely new tasks.

🤖 AI Tech Releases

GPT-5.4

OpenAI released GPT-5.4 in ChatGPT, the API, and Codex with impressive results.

Gemini 3.1 Flash-Lite

Google released Gemini 3.1 Flash-Lite, a version of its latest model optimized for high-volume workloads.

Cursor Automations

Cursor unveiled Automations, its most aggressive agentic release to date.

Qwen 3.5 Small

Alibaba Qwen released a series of small versions of its new 3.5 model.

Phi-4 Reasoning Vision

Microsoft released Phi-4-reasoning-vision-15B, a multimodal reasoning vision model.

📡 AI News You Need to Know About

